I was performing another round of performance load-testing for a customer in Singapore last week. The target was to achieve 10,000 concurrent hits per second (yes, it is a tall order), and the load had to be sustained for 1 hour.

The setup was a pretty old version: OpenSSO 8 Update 2 Patch 4. The embedded OpenDS was even older. It was still OpenDS 1.0.0. Why no upgrade? Well, better dun ask. Political lah.

We had, in fact, performed many rounds of tuning - OS kernel, TCP stack, Web Servers (reverse proxy), Application Servers, JVM options - prior to last week's activity.

We were almost there, except that the test kept failing for 1-2 secs at times. Spikes kept coming after 15-20 mins, went away, then came back again 10-15 mins later ...

So during one of the spikes, I executed a thread dump. And the following was observed:

At that point in time, we had 12 x OpenSSO servers running, each with embedded OpenDS. Me bad, I know. Lazy bum me!

No choice, I made a decision to switch out from 12 x embedded OpenDS to 3 x external Sun Directory Server 7. Political again, dun ask me why you never this.. never that.. :)

Immediately, we could see the effect. The NLWP (Number of Light-Weight Processes) decreased from high-300 to mid-200 once the application servers were restarted. Pretty good improvement.

Subsequent load tests also yielded better results with fewer spikes. The NLWP never increased beyond 650 at peak (previously it could easily reach 900+).

At least, the graphs looked flatter.
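For anyone who wants to watch NLWP the same way, here is a minimal sketch in Python. It is not the tooling I used in this test. It assumes a `ps` that supports the `nlwp` output field (Solaris and Linux do), and the function names, the sampling interval and the sample count are all made up for illustration.

```python
import subprocess
import time


def parse_nlwp(ps_output: str) -> int:
    """Turn the output of `ps -o nlwp= -p <pid>` into an integer thread count."""
    return int(ps_output.strip())


def read_nlwp(pid: int) -> int:
    """Ask ps for the number of light-weight processes of one process.

    Assumption: the local ps accepts the `nlwp` field; `nlwp=` suppresses the header.
    """
    result = subprocess.run(
        ["ps", "-o", "nlwp=", "-p", str(pid)],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_nlwp(result.stdout)


def sample_nlwp(pid: int, interval_s: float = 5.0, count: int = 12) -> list:
    """Collect `count` NLWP readings, pausing `interval_s` seconds after each one."""
    samples = []
    for _ in range(count):
        samples.append(read_nlwp(pid))
        time.sleep(interval_s)
    return samples
```

Call `sample_nlwp(<application server JVM pid>)` during a load test and take `max()` of the result to get the peak for that window.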

We are now on our last mile... I think the reverse-proxy servers are over-loaded. Adding more hardware at the moment... Hopefully, we can achieve the expected result in a week or 2!

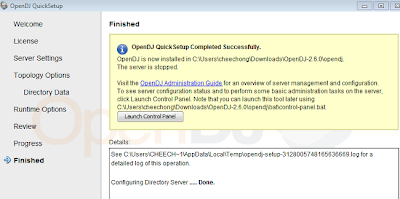

PS: My friends at ForgeRock also confirm that a deployment of >4 OpenAM instances with external OpenDJ as the configuration store yields better performance. It is largely due to the meshed replication setup. Also, using the latest version of OpenDJ (2.6.0 at the moment) will help as well. OpenDS is far too old. :)
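A quick back-of-envelope sketch of why the mesh hurts, assuming every directory server keeps a replication link to every other one (a full mesh; that is my reading of "meshed", not something measured in this test). The function name is just for illustration.

```python
def mesh_links(servers: int) -> int:
    """Number of server-to-server links in a full mesh of `servers` nodes."""
    if servers < 0:
        raise ValueError("servers must not be negative")
    # Each of the n servers pairs with the other n - 1; every pair is counted once.
    return servers * (servers - 1) // 2


for n in (12, 3):
    print(f"{n} directory servers -> {mesh_links(n)} replication links")
# 12 directory servers -> 66 replication links
# 3 directory servers -> 3 replication links
```

Under that assumption, going from 12 embedded instances to 3 external ones cuts the replication links from 66 to 3.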
